Last Updated on June 17, 2019

An SEO audit helps to fine tune your attention to the problems on your website that really matter.

This guide will go over a basic to advanced checklist of how to perform an SEO audit.

It contains example audits for local sites, ecommerce websites, and affiliate / ad revenue websites.

Tools For A Quality SEO Audit

Any audit requires tools.

They simply help to collect data fast, which is especially important for large website audits.

I’ll separate these into the basic audit tools, and the advanced audit tools.

Basic Audit Tools

Google Analytics (free) – used to track traffic by device, location, landing page, and other factors.

Google Search Console (free) – used to track indexing, crawl rates, structured data, and other factors.

Panguin Tool (free) – used to overlay Google updates onto traffic stats, to check for penalties.

Advanced Tools

SEMrush (paid) – rank tracking and keyword research.

Ahrefs (paid) – link analysis and keyword research.

SERP Preview tool from SSM (free) – used for checking limits of page titles and meta descriptions.

SEO Audit Definitions / FAQ’s

Before we dive in, here are some quick references for those who might not be familiar with what an audit is, or why they should conduct one.

What is an SEO audit?

An SEO audit is the process of following a checklist or tool (or both) to evaluate how search engine friendly a website is. An SEO audit will consider on page factors (on the website itself) and off page factors (including inbound links and brand search volume). A good SEO audit will consider the crawlability, indexability, and quality score of a website based upon up to date Google ranking factors.

Why do we need an SEO audit?

An SEO audit helps to focus your efforts onto the highest priority areas of your website from an SEO standpoint. It helps you to get the best return on your marketing spend because you focus on what will give you the biggest ranking increases for the least amount of work.

Without an audit, you will often scatter from one SEO problem to another, or pick up the latest “SEO news” post and try to fix your website based upon that. This usually provides mediocre results, when compared to a focused plan based upon a good quality audit.

How much should an SEO audit cost?

The cost of an audit depends on how much depth of information is given and how large the website is.

Personally, a typical audit I conduct costs in the region of £450 – £850 and provides a lot of detail.

However very large websites will often require longer to dive deep into issues, so you may consider focusing on sitewide technical changes before doing more in-depth analysis to keep the cost down.

How long does an SEO audit take?

A website audit can take in the region of 6-10 hours of work to complete, depending on the depth and size of the site. To be effective the audit must identify key SEO problems with the website strategy, and so ironically the better the website’s SEO, the more expensive an audit will be.

During this checklist I will include “What to note” sections which will provide insights on what you should be noting in your audit report.

Basic (ish) SEO Audit Checklist

Here is the minimum work you should be doing during a basic SEO audit to understand the main problems and opportunities of a website. To skip straight to the advanced section click here.

Quick Trio of Technical

First off, some basic indexing and redirect checks.

HTTPS / www. – 301 Redirects

301 redirects pass search engine value, while 302 redirects do not (in most cases). So when moving from http to https, or simply choosing between www. and non-www., you need to make sure the alternatives all 301 redirect to the main version.

As you might’ve guessed, plenty of developers use 302’s interchangeably without thinking, creating problems.

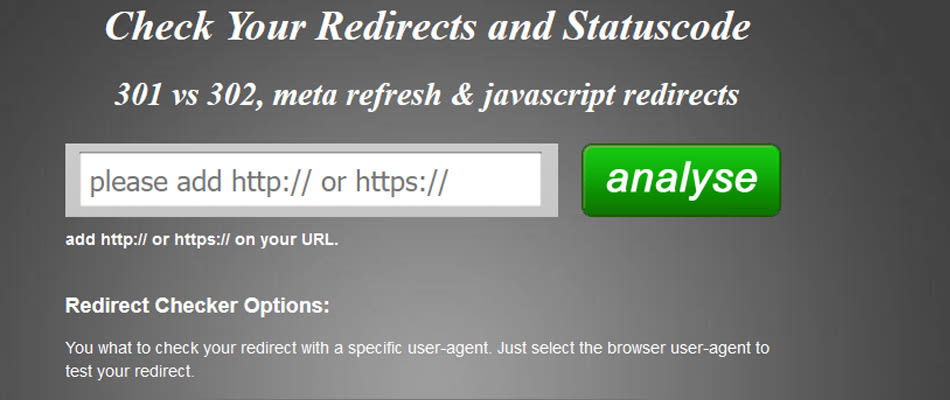

To check these, I like to use this Redirect Checker tool.

To use it, simply add the non-https (http) version of your website, click Analyse, and see if it’s a 301 or 302. Do the same for the other variations to make sure they all redirect correctly (non-www. http, www. http, non-www. https, www. https).

If that redirect tool is ever broken, simply Googling “follow redirect” will find another one that should do.

What to note: Any variations that either don’t redirect, or redirect with a 302 should be noted as such.
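If you would rather script this check than paste each variation into a tool, here is a minimal sketch in Python using only the standard library. The function names (`fetch_redirect`, `variants`, `audit_note`, `audit_redirects`) are my own for illustration, and it assumes the server answers HEAD requests the same way as normal page loads, which is not guaranteed. It also compares the Location header as an exact string, so a relative or slightly different Location would need a manual look.

```python
import http.client
from urllib.parse import urlsplit

PERMANENT = (301, 308)       # permanent redirects
TEMPORARY = (302, 303, 307)  # temporary redirects


def fetch_redirect(url, timeout=10):
    """Request a URL without following redirects; return (status, Location header)."""
    parts = urlsplit(url)
    conn_cls = (http.client.HTTPSConnection if parts.scheme == "https"
                else http.client.HTTPConnection)
    conn = conn_cls(parts.netloc, timeout=timeout)
    try:
        conn.request("HEAD", parts.path or "/")
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def variants(bare_domain):
    """The four protocol / www. combinations of the homepage."""
    return [f"{scheme}://{prefix}{bare_domain}/"
            for scheme in ("http", "https")
            for prefix in ("", "www.")]


def audit_note(url, status, location, main_url):
    """Turn one response into a line for the audit report."""
    if url == main_url:
        return "main version" if status == 200 else f"main version returns {status}"
    if status in PERMANENT and location == main_url:
        return f"OK: {status} to main version"
    if status in PERMANENT:
        return f"{status}, but to {location} (check for a redirect chain)"
    if status in TEMPORARY:
        return f"temporary redirect ({status}): change to 301"
    return f"no redirect (status {status})"


def audit_redirects(bare_domain, main_url, fetch=fetch_redirect):
    """Check every variation and return {url: note}."""
    return {url: audit_note(url, *fetch(url), main_url)
            for url in variants(bare_domain)}
```

Calling `audit_redirects("domain.com", "https://domain.com/")` makes four live requests and returns one note per variation, ready to paste into the report.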

Homepage Canonical

Not so much a problem in WordPress, but in other CMSs, where links to the homepage may carry a ? or other variables at the end, having a canonical to show the true version of the homepage prevents duplicate indexing.

It’s really simple, add it to your homepage template in the head:

<link rel="canonical" href="https://domain.com/" />

What to note: If the canonical is missing, add a note of the correct tag and how to implement it.
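To check the tag without reading the page source by hand, something like the following sketch would do. It uses Python's built-in `html.parser`; the class and function names are hypothetical, and it expects you to pass in the homepage HTML you have already downloaded.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel:
            self.canonicals.append(attrs.get("href"))


def canonical_note(html, expected):
    """Return a one-line audit note about the page's canonical tag."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return f'missing: add <link rel="canonical" href="{expected}" /> to the head'
    if len(finder.canonicals) > 1:
        return "multiple canonical tags found"
    if finder.canonicals[0] != expected:
        return f"canonical points to {finder.canonicals[0]}, expected {expected}"
    return "OK"
```

The "missing" note already contains the correct tag, so it can go straight into the audit.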

Brand Name Search / site:domain.com = Homepage First

This is a classic sign of some other things being wrong, so it’s a good thing to check starting off. Search for the brand name + modifier if required, and make sure that the website page that shows (if any) is the homepage.

Next do a search for site:domain.com and make sure the homepage is the first in the list.

What to note: If the homepage isn’t showing up first, note what is showing and mark it down for later investigation (perhaps page related penalty, or lack of correct internal linking).

Basic Crawling For Fast On Page Technical Problems

My tool of choice for crawling a website is Screaming Frog, which gives you 500 urls for free (plenty for a basic quick audit).

This will help us identify any urls blocked by the robots.txt file, any missing Page Titles / Meta Descriptions / H1’s / Search Console / Analytics code, and to find any 404 errors or redirect chains.

Simply add the website into Screaming Frog and press start.

Once finished, go to the right hand column and scroll through to the “Response Codes” section.

What to note: Click on the “Blocked by Robots.txt” section and review the pages to check if anything is blocked that shouldn’t be. If it is, export them and add a note in the audit saying to remove the block from the robots.txt file.
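For a quick second opinion on a handful of important urls, Python's standard `urllib.robotparser` can test them against the robots.txt rules. This is only a rough sketch: the helper name `blocked_urls` is mine, and the standard library parser does not understand the `*` and `$` path wildcards that Google supports, so treat Screaming Frog as the source of truth where those are used.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the urls that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

Pass in the contents of the robots.txt file plus the landing pages you care about, and anything returned should be reviewed.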

Next click on the “Client error 4xx”, and in the top left click on export and save it to your folder.

What to note: Make a note in your audit to fix all errors with either redirects or the correct urls.

Next scroll down to “Page Titles”, and export the missing ones. Do the same with the meta descriptions, the H1’s, Directives -> No canonicals, Analytics -> No GA Data, and Search Console -> No GSC Data.

What to note: Mark down that x many pages are missing key on page elements, and include the combined list of the pages with elements missing into the audit.

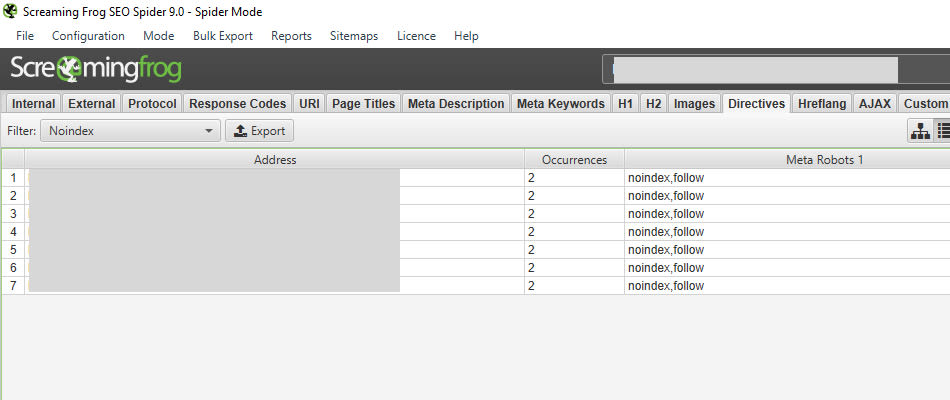

Next go to “Directives” again, and click on “Noindex”. Check the pages here to make sure there are no pages that should be targeting search traffic.

What to note: Mark down any noindex pages that shouldn’t be so, and recommend the tag be removed.

Faster Is Always Better (always)

The speed of your website has a massive impact on user engagement and sales, which in turn influence rankings through your bounce rate and how often searchers return to the SERP.

So it literally pays dividends to have a fast site (but you probably knew this anyway).

Simple checks, just use more than 1 tool.

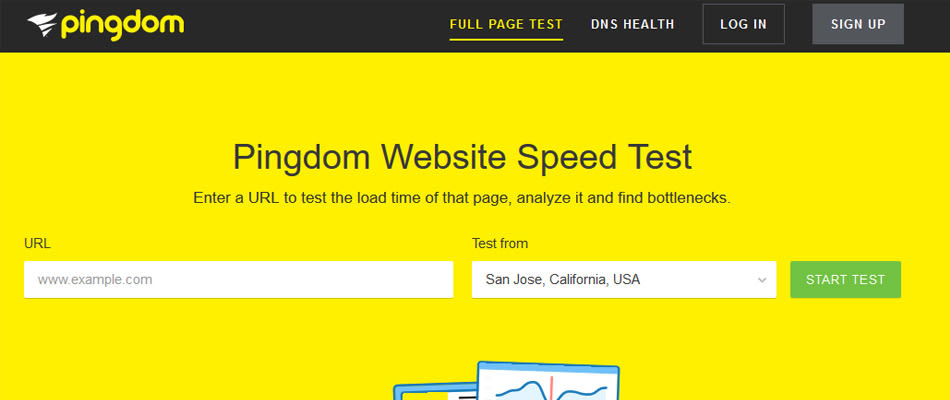

I prefer to use both Pingdom and GTmetrix (make sure to choose the location closest to your target market).

Fairly simple, you want to use both tools to test one variation of each type of page on your site. So for example if it was an ecommerce website then you would test the homepage, a category page, a product page, the checkout page and maybe an info / blog page too.

What to note: Add into a speed section any high priority issues found for each page type separately, and mark down the priority of getting each fixed.

5 Step Basic Search Console Checks

It’s a lot easier to get the info straight from the horse’s mouth, so to the search console we go.

Step 1: Check the index status section – does the curve of pages look like it’s going up or down? Why is this?

What to note: any decline in indexing should be analysed and noted. Has there been a site migration with lost pages? Has previous duplicate content been fixed? Find the cause, and note it if it’s a problem.

Step 2: Sitemap errors – under the sitemaps section, do the submitted sitemaps have any errors? If so what are they and do they matter?

What to note: any significant errors in the sitemap that Google dislikes. Note them to be fixed.

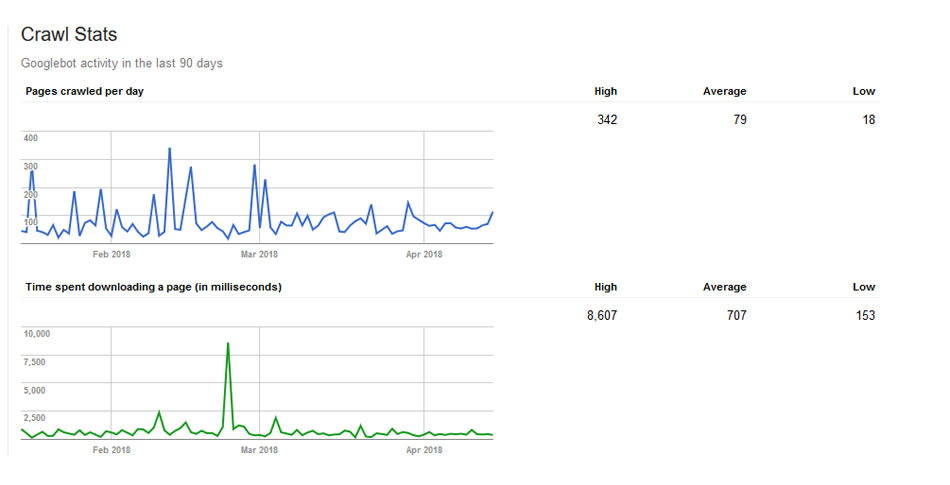

Step 3: Crawl Rates – is the number of pages crawled per day going down? Is the time taken to crawl going up? These could be an indication of slow hosting, or perhaps an upgrade / migration gone wrong.

What to note: any significant downward trend in pages crawled per day, or increase in time taken, should be investigated as it could be a serious problem. Note any findings with potential solutions.

Step 4: Mobile Usability – Go to Search Traffic -> Mobile Usability to see if there are any errors. If there are, check whether they affect one page type or several, and whether the issue is site-wide across all page types.

What to note: Note any pages with errors, and find out the specific problems with each by running them through the Google mobile page tester. If they are sitewide, simply mention the part of the page template that’s causing the issue.

Step 5: Structured Data – Go to Search Appearance -> Structured Data, check to see if there are any errors, and what is causing them (in the data testing tool).

What to note: work out if the errors are site-wide or page specific, and note down the problem + solution.

Basic User Experience / Analytics Checks

Next we will dive into the Google Analytics to find out if there are any big problems to fix.

Mobile User Experience

Even a page that passes the mobile friendly test in Google can be bad for users, so it’s important to check your analytics.

We are looking for pages with a high bounce rate and low pages per session on mobile, indicating that there are potential problems.

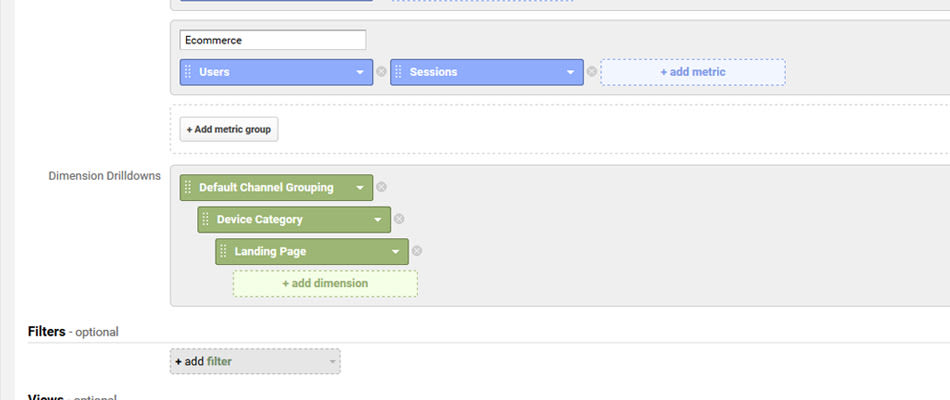

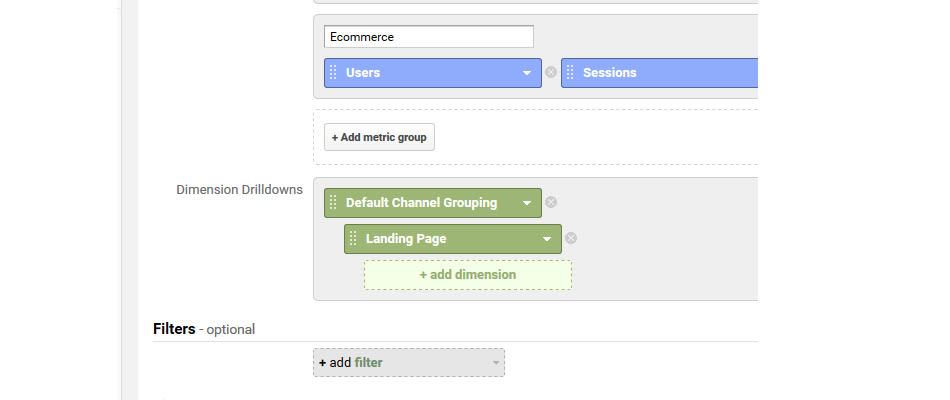

So create a custom report in Google Analytics (simply go to acquisition and then click edit in the top right), and make the Dimensions match the image (Default Channel Grouping -> Device Category -> Landing Pages).

Then you want to select Organic Search, Mobile, and sort by bounce rate. Now use the advanced filter: remove landing page, and add site usage -> sessions with a minimum number that will filter out your weak / low priority pages.

This will leave you with your main landing pages that have the worst mobile engagement.

Next visit the page on your phone, and note down anything that you think might be annoying mobile users. Now Google the main search term for that page and compare the mobile experience of the top 3.

What to note: How does the mobile experience compare with main competitors? How is the experience annoying? Prioritise fixes for each page.

Now sort by pages per session, to find the ones with the lowest pages per session on mobile. Visit the page on mobile and think how you could increase the number of pages they visit by linking to related content / making the next step more obvious.

What to note: note any recommendations to improve each page’s pages per session stat.

Check for Penalties

A useful tool for checking for website penalties is called Panguin, which helps to overlay Google updates onto your analytics data to see what penalty your website may be affected by.

Basic Link Audit

I prefer using Ahrefs, as it seems to provide the quickest actionable data available.

Broken Backlinks

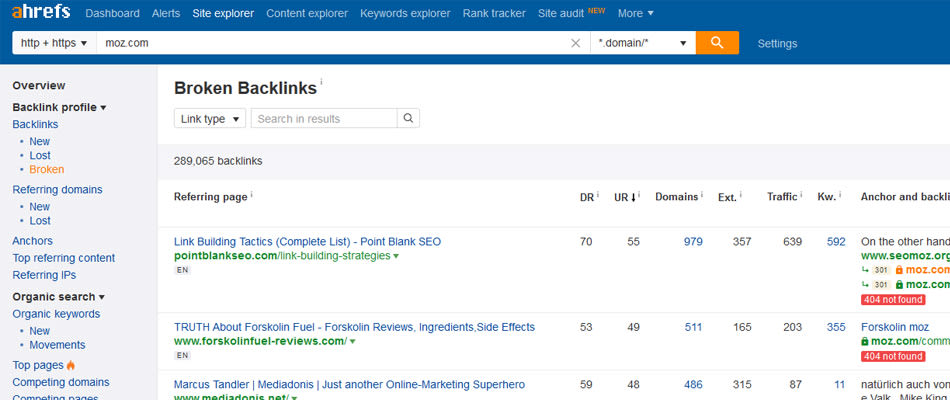

Add your domain to Ahrefs and under Backlinks click on broken. This gives you a quick list of all of the links that are currently not being used by the site, that can be redirected to give value to important pages.

What to note: any pages that have links that are 404 errors, note them to be redirected to a relevant page. Depending on the audit you can create the htaccess rules yourself and include them.
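If you do include the htaccess rules, they can be generated from a simple mapping of broken url to new target. The sketch below assumes an Apache server with mod_alias and uses its `Redirect 301` directive; the function name and example urls are placeholders. Bear in mind that `Redirect` matches on path prefix and ignores query strings, so anything more complicated needs hand-written rules.

```python
from urllib.parse import urlsplit


def htaccess_redirects(mapping):
    """Build one 'Redirect 301 <old path> <new url>' line per broken backlink target."""
    lines = []
    for broken_url, target_url in sorted(mapping.items()):
        path = urlsplit(broken_url).path or "/"
        lines.append(f"Redirect 301 {path} {target_url}")
    return "\n".join(lines)


rules = htaccess_redirects({
    "https://domain.com/old-guide/": "https://domain.com/seo-audit/",
})
print(rules)
```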

Spam / Automated Links

Go to the backlinks section, and sort by reverse DR to find the lowest quality websites linking to you. If a site is under DR 4 and it’s not niche related or receives no ranking traffic (check it in SEMrush.com), then add the domain to your disavow file.

What to note: any domains considered too spammy should be included in a disavow file to upload / add to existing rules.
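As a rough sketch, the rule above can be applied to an exported list of referring domains to produce the disavow file. The field names (`dr`, `niche_related`, `organic_traffic`) are assumptions about how you have labelled your own spreadsheet, not Ahrefs or SEMrush column names, and I am reading the rule as "under DR 4, and either not niche related or with no ranking traffic". The output uses the `domain:` line format that Google's disavow tool accepts, with `#` for comments.

```python
def build_disavow(referring_domains, max_dr=4):
    """Return disavow file text for domains that fail the quality rule."""
    lines = ["# Disavow file generated from link audit"]
    for row in sorted(referring_domains, key=lambda r: r["domain"]):
        spammy = (row["dr"] < max_dr
                  and (not row["niche_related"] or row["organic_traffic"] == 0))
        if spammy:
            lines.append(f"domain:{row['domain']}")
    return "\n".join(lines) + "\n"
```

Always review the list by eye before uploading it, as a disavowed domain stops passing any value at all.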

Advanced Audit Checklist

Here are the extra steps you should take during an in-depth audit to ensure no stone is left unturned. Note this will inflate the amount of time it takes, but also make your analysis have much greater depth.

Identify Cannibal Pages And Recommend Correct Fixes

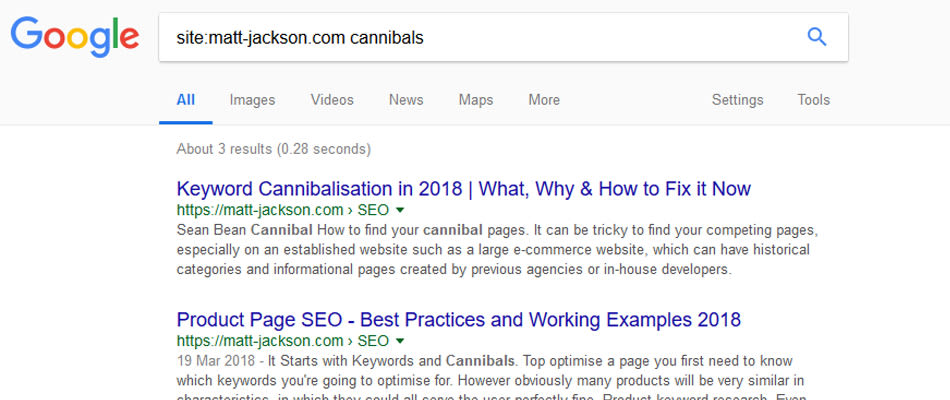

Now I have an in-depth article on this topic, but for a short hand version:

- If you have more than 1 page targeting the same keyword, Google is confused about which one to rank.

- As a result of this it tries to spread out the rankings between the two.

- This makes the two pages rank worse compared to if they were combined into one.

Steps to fix this:

- Identify cannibal pages by doing a search for “site:domain.com keyword” without.

- Look for pages that have the whole keyword in the title, url or H1, as these are the main indicators.

- In Screaming Frog, find your cannibal pages and note the internal links + anchor text.

- Now you either want to de-optimise your cannibal page (done by removing the exact keyword from the elements mentioned, and removing it from the anchor text of internal links) OR by 301 redirecting the page to the main target page and changing all internal links to the new location.

This process can be done for all of the top landing page topics, based upon how much time you wish to take.

What to note: Create a sheet on a spreadsheet for each landing page, listing each cannibal page and whether it should be de-optimised or redirected.
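The "whole keyword in the title, url or H1" check can be roughed out in code once you have a crawl export. This is a minimal sketch, assuming each page is a dict with `url`, `title` and `h1` keys (my own naming, not Screaming Frog's export headers); it only flags candidates, and the de-optimise or redirect decision is still yours.

```python
import re


def _normalise(text):
    """Lowercase and collapse anything that isn't a letter or digit into spaces."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def cannibal_candidates(pages, keyword, main_url):
    """Return (url, matched fields) for pages other than main_url that carry
    the whole keyword in the title, url or H1."""
    needle = f" {_normalise(keyword)} "
    hits = []
    for page in pages:
        if page["url"] == main_url:
            continue
        matched = [field for field in ("title", "url", "h1")
                   if needle in f" {_normalise(page[field])} "]
        if matched:
            hits.append((page["url"], matched))
    return hits
```

Each hit becomes a row on the spreadsheet sheet for that landing page.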

Demographic Matches Sales Proposition

Whilst this would be considered general marketing practice, a mismatch creates worse bounce rates on the site, so it’s an important thing to consider for SEO.

This may involve asking the company owner / representative some questions, but you want to figure out:

- What are the key demographic profiles of your target customers (age, gender, living situation, aspirations, education, employment, location, etc).

- What are the key solutions or benefits that your product / service provides for each demo-profile.

Now the next step requires a bit of empathy.

Imagine you’re the target demographic in question. Go and click around on related sites they would visit, who they would follow on social, other product / service websites that they may visit around your market, and THEN visit your website.

Does it “fit”? Is something “not quite right”? Am I “sold”? What’s “missing in comparison”?

It could be related to the colours, the branding, the wording, the layout of the page, the headline, title, benefits in the copy.

This can also be done via surveys, video recordings of customers using your website, and interviewing people (if they have a physical location). It can create some invaluable insights that will increase your conversions and reduce your bounce rate (and so improve search rankings).

What to note: Although this is a bit grey, if you have gained any unique insights then include it here to be used as a test for engagement and conversions.

Google Search Console Quick CTR Wins

This tactic is mentioned in various places across the web, and it’s a great way to make some fast ranking increases that can turn into conversions.

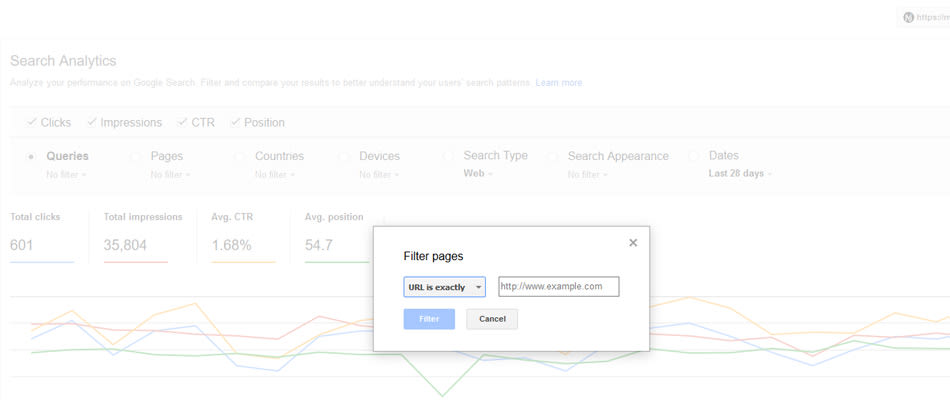

Go to the Search Analytics part of Google Search Console, under Pages, click on the arrow and click filter pages. Change the box to URL is exactly, and paste in your top landing page. You can change the date range to the past 90 days to get some more data if required.

Sort the list of keywords by impressions, and then download the table (button in the bottom left corner).

Now in Excel, filter the position to be less than or equal to 10.

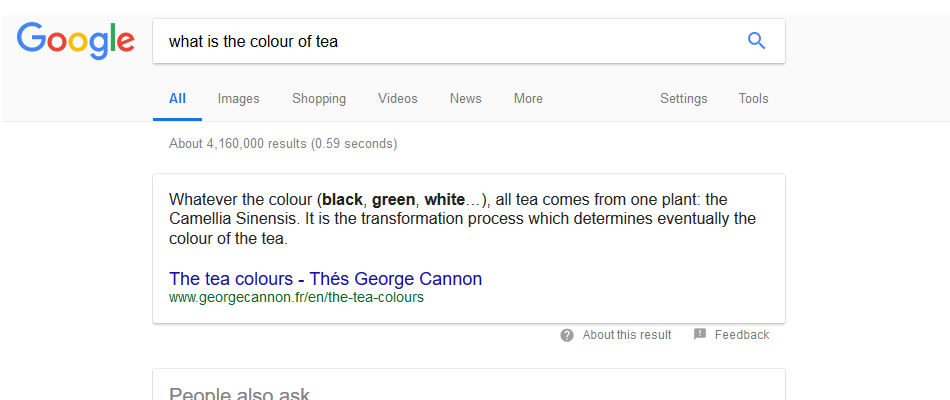

Now you have a list of the keywords where the page is ranking on the first page, and you want to find the keywords where it receives a low CTR. Next Google each poor CTR phrase and try to work out why your listing isn’t getting clicked in comparison with your competitors.

Note you should also visit each page to see if it actually satisfies this other keyword, and if it doesn’t then note changes to the landing page that would fix this.

Do this for as many top landing pages as you require / have time for.

What to note: For each page create a recommended edit to the Google snippet and landing page and recommend it to be tested to see if the CTR goes up.
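If Excel isn't your thing, the same filter can be sketched in a few lines of Python. The row keys (`query`, `clicks`, `impressions`, `position`) are my own labels for the downloaded columns, and the 2% CTR cut-off is purely an illustrative assumption; pick a threshold that makes sense for your positions and niche.

```python
def low_ctr_keywords(rows, max_position=10, max_ctr=0.02):
    """Keep first-page keywords whose CTR is under max_ctr, biggest impressions first."""
    weak = []
    for row in rows:
        # Skip anything off the first page, and rows with no impressions to divide by.
        if row["position"] > max_position or row["impressions"] == 0:
            continue
        ctr = row["clicks"] / row["impressions"]
        if ctr < max_ctr:
            weak.append({**row, "ctr": round(ctr, 4)})
    return sorted(weak, key=lambda r: r["impressions"], reverse=True)
```

The top of the returned list is where a snippet rewrite is likely to be worth testing first.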

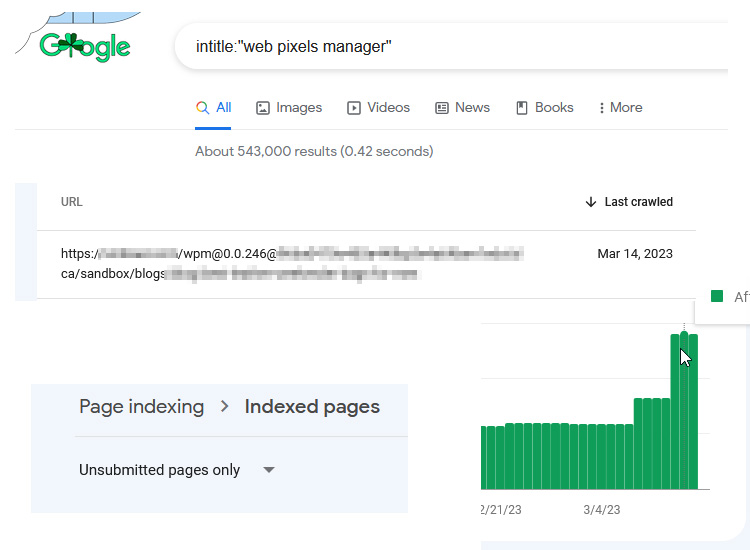

0 Value Landing Pages

Google doesn’t want to index pages that no-one is searching for. They find it hard enough to store the index data as it is, so they’re making every effort to penalise people who waste it.

Pages that are not targeting a keyword should either be set to noindex if they provide internal / referral marketing benefits, or deleted and redirected if they do not serve any purpose.

These are often similar to cannibal pages, but can also just be legacy / news content.

Here are the steps to take:

- To find these pages, do a custom Analytics report for Organic Search -> Landing Pages, and then use an advanced filter to find pages with low traffic from search in the last year.

- Next you want to add these into the Search Analytics part of Google search console as the page filter again, to see if they are in the top 100 for any keywords (alternatively you can paste them into SEMrush).

If the page doesn’t receive any landing page traffic, and doesn’t rank for many / any keywords, then ask yourself:

“Is this page targeting a keyword that we aren’t already focusing a main page towards?”

If the answer is yes, then it should probably be kept, with its quality and keyword depth improved so that it can rank better.

If the answer is no, then the page should be deleted and 301 redirected to a related page.

What to note: Make a list of low value pages, and add whether or not it should be improved, noindexed, or deleted and redirected.
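The decision steps above boil down to a small rule that can be run over the whole list. Here is a sketch of it, assuming each page is a dict with the four keys shown (names invented for illustration) and that "low traffic" means fewer than 10 organic sessions in the year, which is a threshold I've made up and you should adjust.

```python
def page_action(page, min_sessions=10, min_keywords=1):
    """Suggest what to do with a page, following the questions above."""
    if (page["organic_sessions"] >= min_sessions
            or page["ranking_keywords"] >= min_keywords):
        return "keep"
    if page["targets_unique_keyword"]:
        return "improve"
    if page["internal_or_referral_value"]:
        return "noindex"
    return "delete and 301 redirect"
```

The two yes / no fields still need a human answer for each page; the function only keeps the recommendations consistent.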

Featured Snippet Opportunities

There are plenty of ways to optimise for a featured snippet (this blog post by Ann Smarty has some great examples), but to find opportunities we need to look in the Google results and Search Console.

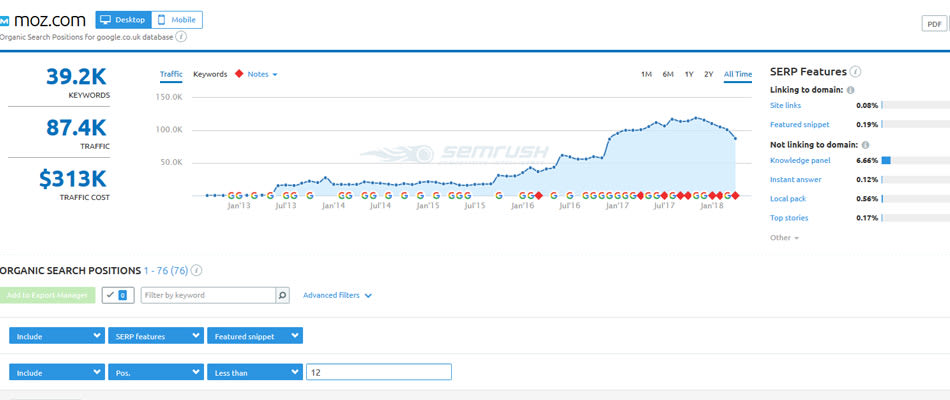

The quickest way to identify your featured snippet opportunities is with SEMrush.

Simply go to your website traffic keywords, filter by positions less than 12, and click on the featured snippets link (top right of graph). There you have all of your opportunities to win a snippet through better optimisation.

Another way to find opportunities is using manual work + Search Console data.

Take your list of quick CTR wins (see previous section), and look for informational based content. Next Google phrases around the main keywords (using related searches, answer box questions, etc) to see which keyword topics produce a featured snippet.

These will be your targets.

What to note: For each landing page, see which keywords produce featured snippets and what form those snippets are in. Make suggestions of how they could optimise / add to the landing page to win the featured snippet from someone.

Keyword Gaps On Top Content

There are often examples where even a well optimised page has missed out on keyword opportunities because of a lack of thorough research.

I’ve done a thorough guide on proper keyword research that you can read here.

The main steps involved:

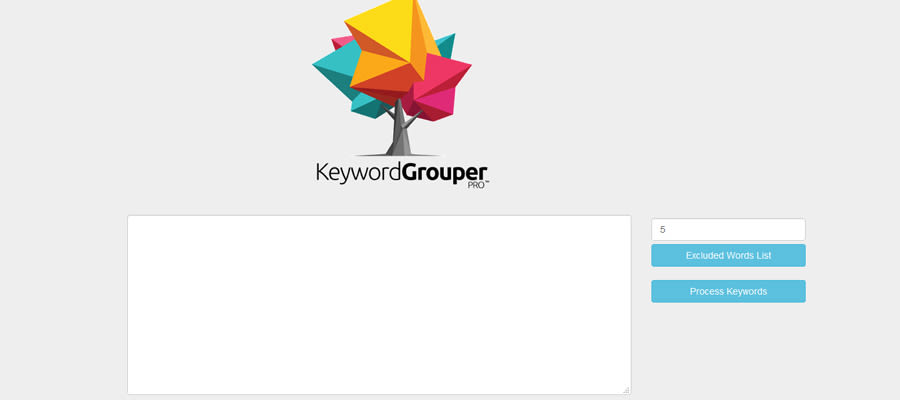

- Exporting keyword lists from both SEMrush and Ahrefs

- Combining them into categories by using Keyword Grouper

- Generating additional FAQ’s by using AnswerThePublic

- Then working out the user intents based upon what already ranks in the top 3 results in Google, and fitting the keywords into sections of user intent.

What to note: After performing this research for each main page, note the keyword gaps and recommendations to change on the landing page and meta information.

Site Specific Things To Always Audit

There are certain aspects of an audit that will differ depending on the type of website, so here are some specific examples below.

Local SEO Audits

The key things to check in the local audit (in addition to the above) are:

- Citation consistency – your listings should contain correct information everywhere.

- Citation gaps – you should have at least as many citations from the same sources as your top competition.

- Schema.org markup – you should have location specific markup on your main page, and it should be niche specific if there is one for your business (such as plumber).

What to note: Write down any areas where the website is lacking, include citations to change / build, and the schema.org code to include in JSON LD format if possible.
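To include the markup in the audit, it can help to generate it rather than type it by hand. Below is a minimal sketch that builds a JSON-LD script tag for a local business; the function name and all of the business details in the usage line are placeholders, and it only covers a handful of schema.org properties (`name`, `url`, `telephone`, `address`), so add opening hours, geo coordinates and so on as required.

```python
import json


def local_business_jsonld(business_type, name, url, telephone,
                          street, locality, postal_code, country):
    """Return a <script> tag containing schema.org markup in JSON-LD format."""
    data = {
        "@context": "https://schema.org",
        "@type": business_type,  # e.g. "Plumber", or "LocalBusiness" if no niche type fits
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": locality,
            "postalCode": postal_code,
            "addressCountry": country,
        },
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder details for illustration only.
snippet = local_business_jsonld(
    "Plumber", "Example Plumbing Ltd", "https://domain.com/",
    "+44 20 0000 0000", "1 Example Street", "London", "AB1 2CD", "GB",
)
print(snippet)
```

Run the output through a structured data testing tool before recommending it.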

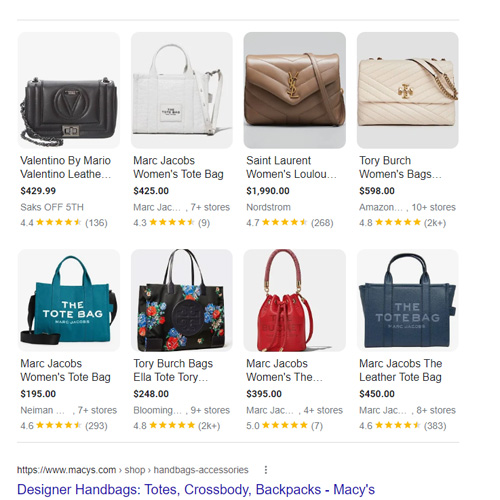

Ecommerce SEO Audits

I have plenty of examples of mini-ecommerce audits here, and there are always some key areas that repeat themselves:

- Incorrect use of filters – often CMS’s will create new pages for filters with duplicated content on them instead of just canonicalling to the main page. You can also find instances of infinite urls based upon filters, where a robot can get lost in your site by following endless links.

- Product Page Schema.org – this helps to provide snippets in the search results and increase CTR (for more info see my rich snippet guide, or my product page SEO guide).

What to note: Check to make sure filters have the correct canonicals, and that schema.org is present and validated. Note any issues that need fixing.
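One way to check filter canonicals in bulk is to work out what the canonical should be by stripping the filter parameters from each url, then compare it with what the crawl found. This sketch assumes filters are query string parameters, and the names in `FILTER_PARAMS` are hypothetical; swap in whatever your CMS actually uses.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical filter parameter names; replace with the ones your CMS generates.
FILTER_PARAMS = {"colour", "size", "sort", "price"}


def expected_canonical(url, filter_params=FILTER_PARAMS):
    """Strip filter parameters (and any fragment) to get the page a filter url should point to."""
    parts = urlsplit(url)
    kept = [(key, value)
            for key, value in parse_qsl(parts.query, keep_blank_values=True)
            if key not in filter_params]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_issue(url, found_canonical, filter_params=FILTER_PARAMS):
    """Return a note if the crawled canonical differs from the expected one, else None."""
    expected = expected_canonical(url, filter_params)
    if found_canonical != expected:
        return f"{url}: canonical is {found_canonical}, expected {expected}"
    return None
```

Feed it the url and canonical columns from your crawl export, and list any notes it returns in the audit.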

Affiliate / News SEO Audits

The main one here is the inclusion of duplicate / thin / no keyword value pages in the index (as mentioned above in the 0 Value Landing Pages section).

News sites especially should keep checking their analytics to remove dead pages and keep their index search relevant.

Affiliate sites can often experience cannibalisation by trying to dive too granular with their content, so making sure to structure your site correctly to pass value to the parent topic is important.

SEO Audit Examples

Here are some examples of small website audits that I’ve published online. They only show a fraction of this template in action, but are a good way to get to grips with performing site audits.

Download SEO Audit Checklist PDF

If you want to be able to tick these off as you go, I created a quick reference PDF to use in your day to day auditing.

You Made it!

That was a bit of an epic post if I do say so myself, and well done for reading all the way through (assuming you didn’t just scroll to the end!).

Is there anything that confused you? Ask a question in the comments.

Anything missing in the post? Email it to me: info@matt-jackson.com

Sounds too much like hard work? I offer paid audits based on this methodology. Email me to get started: info@matt-jackson.com
