Last Updated on November 26, 2019

One of the most common and harmful on-site SEO mistakes that I notice on a regular basis is keyword cannibalisation (or cannibalization with a z).

This tutorial will outline what keyword cannibalisation is, why it’s a problem, and how you can fix it on your website.

2018 Warning / Update: I’ve added an update post (open it in a new tab to read after this one) to make sure you don’t go completely the other way and ignore long tail keywords.

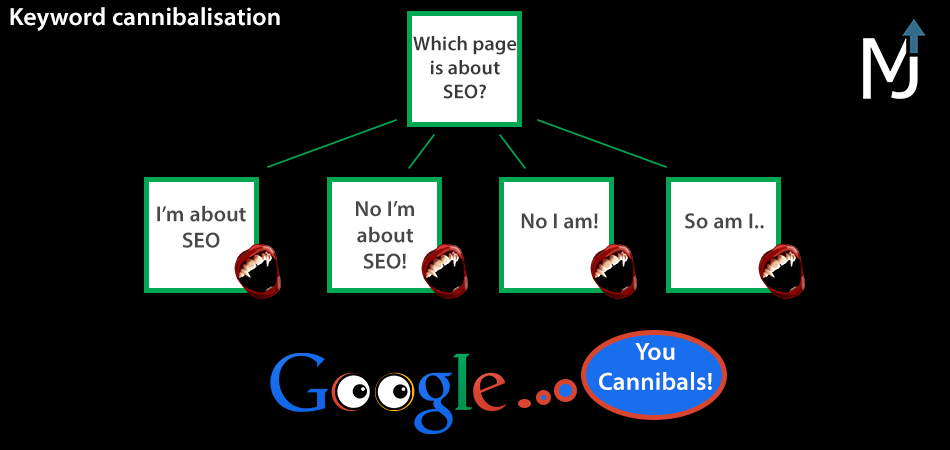

What is keyword cannibalisation?

Keyword cannibalisation or cannibalization occurs when multiple pages on the same website are targeting the same keyword or group of keywords.

This results in lower Google rankings for the collection of pages as a whole than if you had one page targeting the phrase or group, hence the name cannibalisation (the website eats away its own rankings).

Why is keyword cannibalization a problem?

The main problems that occur are:

- Diluted SEO Effectiveness – your internal link equity is spread across every competing page, but only one will end up ranking for the terms.

- Lower Conversions – often a page you did not intend to rank will rank for your keyword, and its content may be less well optimised for your desired conversion action. This means the user isn’t satisfied, resulting in a higher bounce rate and lower rankings altogether!

- Wasted Crawl Time – Google has a finite amount of dedicated time for your website, and wasting it on competing pages makes it less likely to crawl your higher priority pages.

Fixing keyword cannibalization is part of my SEO audit strategy.

How has keyword cannibalisation changed in 2018?

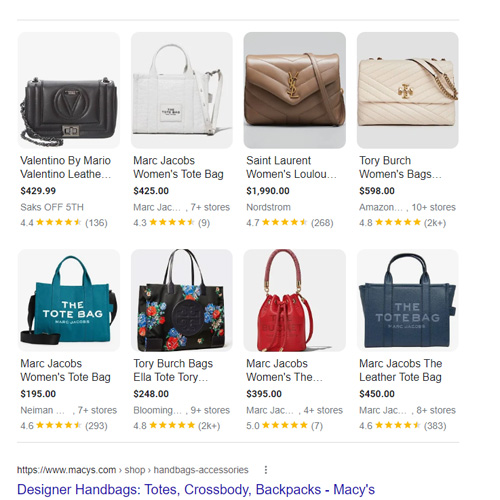

Google is trending towards ranking fewer pages for more keywords, and with the introduction of RankBrain, they can test whether this is better or worse for the user.

They now prefer to rank larger pages that target multiple phrases at once, and have really good user experience signals for all queries, whether that’s a long tail keyword or short tail keyword.

This means that even a page that isn’t an obvious cannibal may still be competing, because Google wants to rank a main category page for that query instead of a specific page.

How to find your cannibal pages

It can be tricky to find your competing pages, especially on an established website such as a large e-commerce website, which can have historical categories and informational pages created by previous agencies or in-house developers.

The best route forward is to Google your keyword phrases and see whether the top 4 results are specific pages or top level general pages.

From here you can map out how your site should be optimised, with the correct keywords connected to the right type of page.

This may seem a little complicated, so let’s look at some examples.

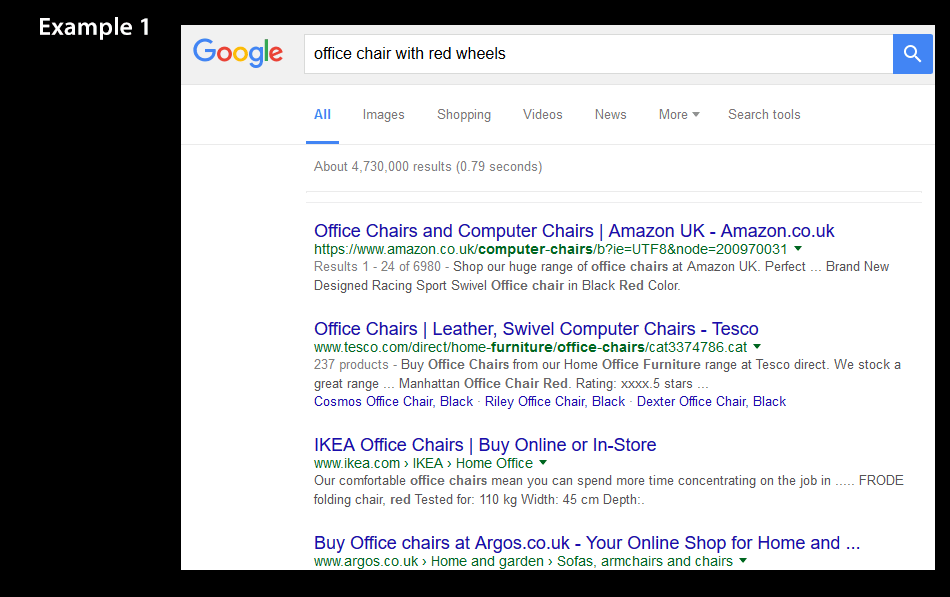

Example 1: A Preference Modifier

This is an example of how Google ranks general main category pages for specific keywords.

Some may consider this a long tail keyword to be targeted with a product page, but as we can see, Google believes the user wants to see any red office chair, as opposed to one with specifically red wheels.

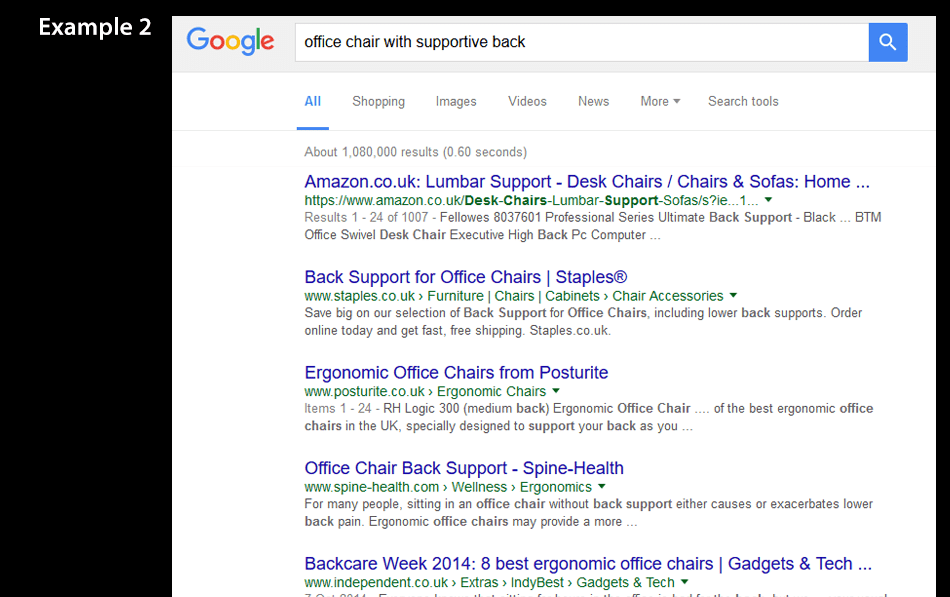

Example 2: A Specific Requirement

This example shows a case where Google is displaying specific results for this query.

They have most likely split tested general and specific results, and decided that users were more satisfied with specific pages about supportive office chairs. Therefore, that is the type of page you want to target for that query.

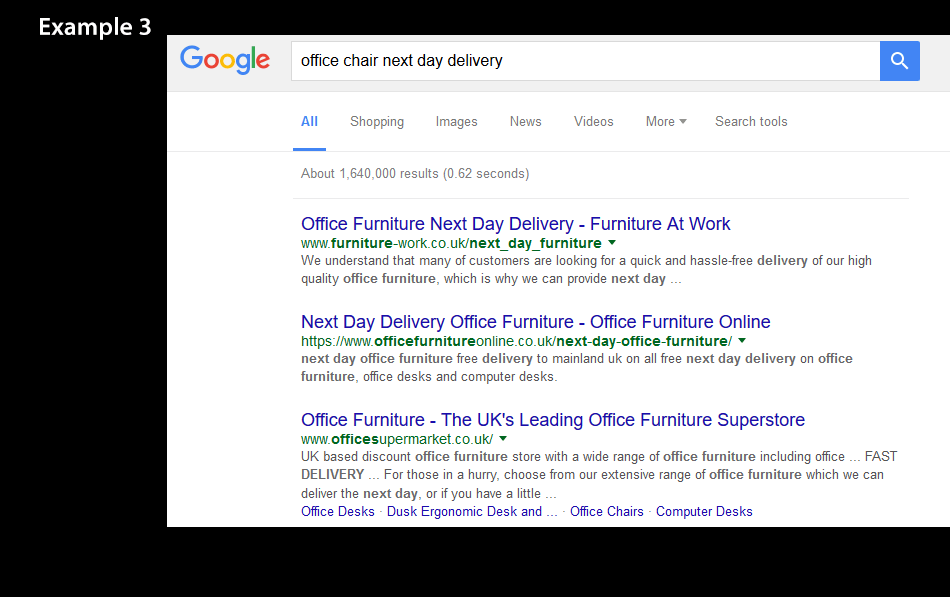

Example 3: Commercial Modifiers

This is an example of how Google moves higher up the topic when dealing with commercial modifiers, and is something I’ve noticed across multiple industries.

Once you add commercial modifiers such as “buy”, “online”, or “next day delivery” to your query, you get presented with a page dedicated to commercial activities, which is often at a higher level than your query.

In our example above, the “office chair” query returns “office furniture” pages.

This is why starting at the SERPs is the best way to begin your website structure mapping process.

Mapping Your Structure

So for each of your keyword phrases, you want to create groups of queries based around user intent.

Then after you have your groups, you need to Google each keyword to see which type of page is brought back.

Then you can decide whether a keyword fits into a specific landing page, or as part of a master page.
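As a rough sketch of this mapping step, the snippet below groups keywords by intent and records which type of page Google brought back for each. The keywords, intent labels, and the "specific"/"general" page types are hypothetical example data loosely based on the office chair examples above; you would fill them in by hand after checking the SERPs.

```python
from collections import defaultdict

# Hypothetical observations, recorded by hand after Googling each keyword:
# (keyword, intent group, type of page Google ranks: "specific" or "general")
observations = [
    ("red office chair", "office chairs", "general"),
    ("office chair with red wheels", "office chairs", "general"),
    ("supportive office chair", "supportive office chairs", "specific"),
    ("buy office chair online", "office furniture", "general"),
]


def map_keywords(rows):
    """Group keywords by intent, split by the page type the SERP favours."""
    plan = defaultdict(lambda: {"specific": [], "general": []})
    for keyword, intent, page_type in rows:
        plan[intent][page_type].append(keyword)
    return dict(plan)


plan = map_keywords(observations)
for intent, groups in plan.items():
    print(intent, groups)
```

Keywords that land under "general" would belong on a master page for that intent, while those under "specific" are candidates for their own landing page.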

You can learn more about how to structure your ecommerce urls here.

Finding The Cannibals

After you have mapped out your ideal site structure, you can identify your current cannibal pages.

There are several ways we can identify competing pages, and it’s best to use all of these to find every single one of your cannibals!

- site:domain.com inurl:keyword – using this operator in a Google search can help us find every page on our website which has this keyword in the url. The url is often optimised with the keyword and can help us find potential duplicate pages.

- Visit your sitemap, and do a ctrl + f for your keyword – this will help find all the pages within your sitemap targeting your keyword, helping you find old pages buried within your site that you may not have known about before.

- SEMrush keyword data (visit SEMrush) – this will give you a list of keywords with the page urls that rank for those keywords. It can help identify where you may have the wrong urls ranking for your keywords, which can be the result of cannibalisation. Simply sort by keyword to identify duplicates. This could also be done using the Ahrefs traffic report.

- site:domain.com intitle:keyword – similar to the first operator, this will show each page with our chosen keyword in the Title.

- Ahrefs have produced a Google sheet that you can export your rankings into, and it will automatically find the cannibals for you (see it here).
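If your sitemap is too large for ctrl + f, the sitemap check can be scripted. This is a minimal sketch, assuming a standard XML sitemap and that your urls use hyphens between words; the sample sitemap and the function name `urls_containing` are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content; in practice, load your own sitemap.xml
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://domain.com/office-chairs/</loc></url>
  <url><loc>https://domain.com/blog/best-office-chairs-2016/</loc></url>
  <url><loc>https://domain.com/desks/</loc></url>
</urlset>"""


def urls_containing(sitemap_xml, keyword):
    """Return every sitemap url that contains the keyword as a hyphenated slug."""
    slug = keyword.lower().replace(" ", "-")
    root = ET.fromstring(sitemap_xml)
    locs = [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]
    return [url for url in locs if slug in url.lower()]


for url in urls_containing(SAMPLE_SITEMAP, "office chairs"):
    print(url)
```

This only catches pages with the keyword in the url, so it complements the title and rankings checks rather than replacing them.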

Doing this for each keyword should easily bring back your problem pages.
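The "sort by keyword to identify duplicates" step on a rankings export can also be sketched in a few lines. The column names `keyword`, `url` and `position` are assumptions for this example, not the real headers of a SEMrush or Ahrefs export, so rename them to match your file.

```python
import csv
import io
from collections import defaultdict

# Hypothetical rankings export; real exports will have different columns
SAMPLE_EXPORT = """keyword,url,position
office chairs,https://domain.com/office-chairs/,4
office chairs,https://domain.com/blog/best-office-chairs-2016/,11
standing desks,https://domain.com/standing-desks/,3
"""


def find_cannibals(csv_text):
    """Return keywords that have more than one of your urls ranking."""
    urls_by_keyword = defaultdict(set)
    for row in csv.DictReader(io.StringIO(csv_text)):
        urls_by_keyword[row["keyword"].strip().lower()].add(row["url"].strip())
    return {kw: sorted(urls) for kw, urls in urls_by_keyword.items() if len(urls) > 1}


for keyword, urls in find_cannibals(SAMPLE_EXPORT).items():
    print(keyword, urls)
```

Any keyword that comes back with two or more urls is worth a manual look; it may be a cannibal, or it may be two pages serving different intents.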

How to fix your cannibal pages

First we need to decide if the pages are serving any benefit to the users on our website. From here we can then create two categories:

- Pages that are useful, and require de-optimisation.

- Pages that aren’t useful, and require redirection.

De-Optimising Pages

This basically means you want to make these pages less relevant to your cannibal query, by removing the keyword & synonyms from the key areas causing cannibalisation issues.

These are connected to your on-page factors, your on-site factors, and your external factors.

The on-page factors to de-optimise are:

- Page Title

- Meta description

- Url (remember to redirect)

- H1, H2, H3

- Image alt text

On-page factors to optimise are:

- Add exact match internal links in the main body of content from your cannibal page back to the main page.

The on-site factors to de-optimise are:

- Anchor text of internal links

The off-site factors to de-optimise are:

- Inbound link anchor text

Important Note on Redirecting:

You often will not have control over your inbound linking anchor text, and therefore for a heavily optimised page that you want to keep for users, it may be better to redirect the page to your main page for that keyword, and create a new url entirely.

Redirecting Pages

For large websites this can become a nightmare, with an htaccess file longer than most people’s PhD thesis.

However it is well worth it in the long run, as removing the cannibalisation will leave you with higher rankings for the entire site.

You want to use the 301 redirect rule, to set the page as permanently moved to the new location, for example:

Redirect 301 /test-cannibal https://domain.com/main-keyword/

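If you are redirecting sub-category urls as well as the main category, the sub-category redirects must appear above the main category redirect in the htaccess file. Apache’s Redirect directive matches by path prefix and applies the first matching rule, so a main category rule placed first would also catch the sub-category urls. For example: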

Redirect 301 /test-cannibal/sub-category https://domain.com/main-keyword/

Redirect 301 /test-cannibal https://domain.com/main-keyword/
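With a long list of redirects it is easy to get this ordering wrong by hand. One way to handle it, as a sketch, is to generate the lines from a mapping and sort the longest old paths first, since a sub-category path is always longer than the category path it sits under. The function name `ordered_redirect_lines` is just illustrative.

```python
def ordered_redirect_lines(redirects):
    """Build Redirect 301 lines with the longest (most specific) old paths first.

    redirects: dict mapping old path -> new url
    """
    # Longest path first, then alphabetical so the output is stable
    ordered = sorted(redirects.items(), key=lambda item: (-len(item[0]), item[0]))
    return [f"Redirect 301 {old} {new}" for old, new in ordered]


redirects = {
    "/test-cannibal": "https://domain.com/main-keyword/",
    "/test-cannibal/sub-category": "https://domain.com/main-keyword/",
}

for line in ordered_redirect_lines(redirects):
    print(line)
```

The printed lines can then be pasted into the htaccess file in that order.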

And after you have completed this htaccess file, make sure to remove all internal links to the old pages, and remove them from any sitemap, both html and xml sitemaps.

If you end up with chains of redirects, it can slow down the website and Google’s crawl rate. So as you implement your new redirects, check whether the old url is already the target of earlier redirects, and if it is, update those redirects to point straight at the new page location.
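Here is a small sketch of how redirect chains could be collapsed before writing the htaccess file. It assumes you keep your redirects as a simple mapping of old path to new path on the same site; the paths and the function name `flatten_redirects` are hypothetical.

```python
def flatten_redirects(redirects):
    """Point every old path at its final destination, skipping intermediate hops."""
    flattened = {}
    for source, target in redirects.items():
        seen = {source}
        # Follow the chain until the target is no longer redirected itself
        while target in redirects:
            if target in seen:
                raise ValueError(f"Redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flattened[source] = target
    return flattened


# Hypothetical history: /old-chairs was redirected to /test-cannibal years ago,
# and /test-cannibal is now being redirected to the main page
redirects = {
    "/old-chairs": "/test-cannibal",
    "/test-cannibal": "/main-keyword/",
}

print(flatten_redirects(redirects))
```

After flattening, both old paths go to the main page in a single hop, and a loop raises an error instead of silently shipping.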

I wrote a post on how to structure your ecommerce category pages that you can read here.

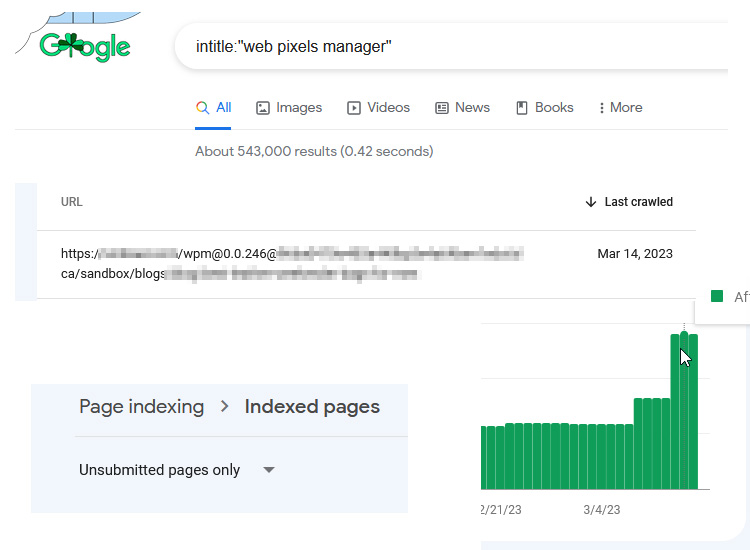

Keyword Research Process – Don’t Ignore The Longtail Completely!

It’s quite easy to get caught up in the mindset that everything is a cannibal and your website should only have a few pages, but this is still far from the truth!

There are some instances where even if no specific page ranks for a keyword it could still represent an opportunity.

One of the sure-fire ways of finding out is to check the intitle:“keyword” results in Google.

If there aren’t many pages targeting that keyword (particularly a lack of niche relevant authority websites) then it may well be a long tail keyword opportunity and not a cannibal.

And if eventually it proves to be a cannibal, you can always redirect it after you’ve tested the effectiveness of it.

You can read more about ecommerce keyword research in my guide here.

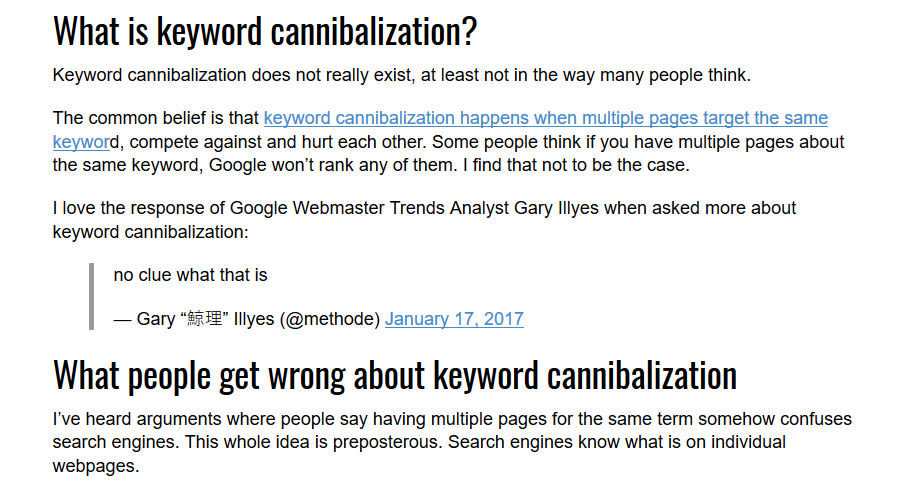

A note on Conflicting Search Engine Land Article 2018

I read this article on keyword cannibalisation on Search Engine Land, and decided to jump in.

The premise seems to be that keyword cannibalisation doesn’t exist, and the example given is Search Engine Land ranking for “technical seo”.

I could start a rant about SEL being a niche authority, or that no searcher is removing the filter, or that now in May 2018 only 1 SEL page is ranking for that term, or that the only reason it ranks for the head term is because it satisfies a sub-query “technical seo checklist”.

But I don’t need to do that.

That’s because the conclusion of the article contradicts the title, and admits the problem + shows the solution:

“As long as the intent is the same and the content is similar, I’d typically go for fewer, stronger pages.”

“When you have multiple pages for a head term such as “technical SEO,” you may be splitting equity trying to decide which page to link to; in this case, consolidation may be best.”

Well with a revenue model based upon page views and advertising, online newspapers have to jump on trends and artificially gain clicks.

Ahrefs have also jumped on the bandwagon quoting the article, using the “Google is smart” [and knows the page’s user intent] argument.

Well obviously a cannibal page is predicated on the fact that both pages are trying to serve the same user intent. Otherwise it wouldn’t be a cannibal, it would simply be a long tail article aimed at a keyword variation. As explained with the RankBrain split testing, if it’s ranking, Google thinks it serves the same intent (or sub-intent).

And if it started to cannibalize the head term, it could be fixed with proper site structure and internal linking to keep the main page as a priority.

So keep on fixing your cannibals, and take SEO news headlines with plenty of salt.

Conclusion

There you have it, the complete guide to sorting out your pesky cannibalization issues.

Some have said recently that cannibalisation is a historical problem from a by-gone era, but I have to politely disagree.

With Google’s trend towards ranking larger pages that satisfy a broader user intent, cannibalization is actually more common now than it ever was.

The key is to always start from the SERPs, and work backwards, as this shows what RankBrain already considers to be the ideal solution for the user.

If you would like any advice or help on this, you can leave a comment or email me: info@matt-jackson.com

Here is an older video of me discussing the problem of keyword cannibalisation:

Matt, Just watched your semrush video and can’t thank you enough. That simple spreadsheet works wonders! My sites are less of a mess thanks to you, but still works in progress because fixing takes time. Prevention works wonders though.

Thanks

You’re welcome Steve, glad I could help.

Good article thanks

Great Article. Currently I am doing the same for News articles. Does it really have an impact on SEO?

Hi Kunal,

Yes it does have an impact if that’s the problem you’re facing.