Last Updated on September 13, 2022

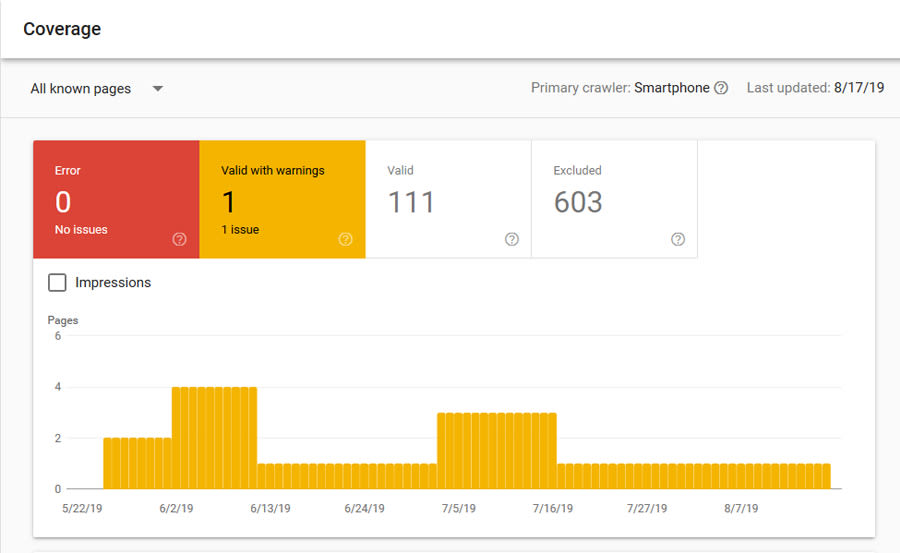

The yellow errors in the Coverage section of Google Search Console continue!

This time I’ll cover how to find and fix the “Submitted URL Blocked by Robots.txt” issue.

Why is this an error?

This is not just an error: it can potentially be a HUGE SEO issue, and is one to fix immediately.

The robots.txt file tells Google how to behave when crawling your website.

It is usually located at: domain.com/robots.txt

You will usually have to edit it via FTP or the File Manager provided by your web hosting.

If Google sees a page as being “blocked”, it is not allowed to crawl that page, and when Google can’t crawl something, it will often REMOVE IT FROM THE INDEX COMPLETELY.

Now this isn’t such a problem for a filter page that you don’t want indexed, but if it’s your main category or landing page, then you’ve got a big problem.

The main reason this error occurs is that you have a page in your sitemap that is being blocked, which means you’re telling Google two conflicting things:

- Here is my Sitemap, Google please index all of these pages.

- Here is my robots.txt file, please don’t crawl pages like these.

And if you have this error, it means one or more of your pages is listed in your sitemap while also matching a blocking rule in your robots.txt file.
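To see which pages are caught in that conflict, you can compare the two files yourself. The following is a minimal sketch using only Python's standard library; the `example.com` URLs, the sample rules, and the function names are made up for illustration. Be aware that Python's `urllib.robotparser` does simple prefix matching and does not implement Google's wildcard (`*`, `$`) handling, so treat the result as a first check rather than a replica of Googlebot.

```python
from urllib import robotparser
import xml.etree.ElementTree as ET

# Standard sitemap namespace, needed to find <url>/<loc> elements.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> list[str]:
    """Return every <loc> URL listed in a sitemap XML document."""
    root = ET.fromstring(sitemap_xml.strip())
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the robots.txt rules disallow for user_agent."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical sample data: a rule hiding filter pages, and a sitemap
# that (by mistake) still lists one of those filter pages.
ROBOTS_TXT = """
User-agent: *
Disallow: /filter/
"""

SITEMAP_XML = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/category/shoes</loc></url>
  <url><loc>https://example.com/filter/red</loc></url>
</urlset>
"""

conflicts = blocked_urls(ROBOTS_TXT, sitemap_urls(SITEMAP_XML))
for url in conflicts:
    print("In sitemap but blocked by robots.txt:", url)
```

On a real site you would load the live `robots.txt` and sitemap contents instead of the inline strings above.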

How to Find and Fix The Error

Step 1 is to make sure these pages are supposed to be blocked.

This error often reveals that someone has blocked something by accident, which lets you fix it before it causes you some big SEO headaches.

If the pages aren’t supposed to be blocked, then analyse the rules in your robots.txt file, find the one blocking your main page or pages, and rewrite it so it no longer blocks them (but still blocks what it was meant to block in the first place).
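As an illustration of that kind of rewrite, here is a small sketch that tests a rule before and after narrowing it. The `/shop` paths, the `example.com` URLs, and the `is_allowed` helper are assumptions made up for this example, not rules from any particular site. It relies on Python's `urllib.robotparser`, which only does prefix matching, so rules that use Google's `*` or `$` wildcards need to be checked another way.

```python
from urllib import robotparser


def is_allowed(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt rules let user_agent crawl url."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical rule that was meant to hide filter pages,
# but blocks every URL starting with /shop.
TOO_BROAD = """
User-agent: *
Disallow: /shop
"""

# Narrower rewrite: only the filter sub-path is blocked.
NARROWED = """
User-agent: *
Disallow: /shop/filter/
"""

LANDING_PAGE = "https://example.com/shop/shoes"
FILTER_PAGE = "https://example.com/shop/filter/colour-red"

for label, rules in (("before", TOO_BROAD), ("after", NARROWED)):
    print(
        label,
        "| landing page allowed:", is_allowed(rules, LANDING_PAGE),
        "| filter page allowed:", is_allowed(rules, FILTER_PAGE),
    )
```

With the narrowed rule the landing page becomes crawlable again while the filter page stays blocked, which is the outcome Step 1 is aiming for.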

Step 2 – if the pages are blocked on purpose – remove them from the sitemap.

The way to remove pages from the sitemap varies from one CMS to another, but here are some examples:

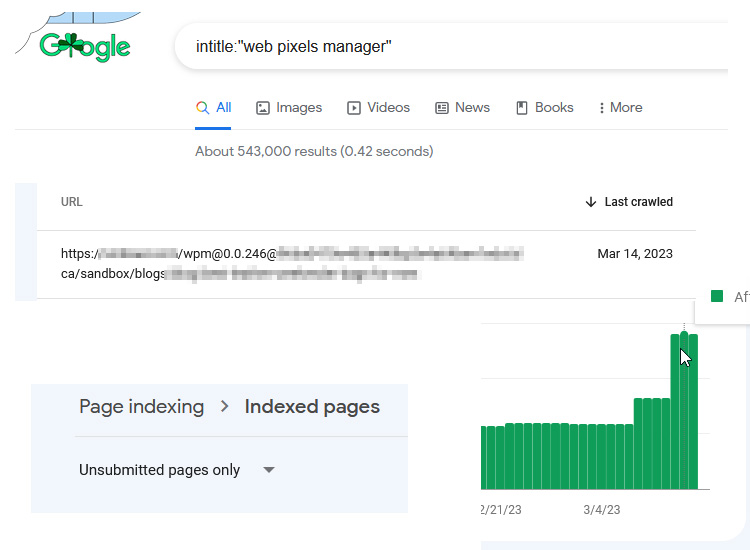

- WordPress – the Yoast plugin will usually do this for you automatically if you have edited the “Search Appearance” settings to remove certain pages from the index. Individual pages can also be removed by following these instructions: https://kb.yoast.com/kb/sitemap-shows-excluded-posts-pages/

- Shopify – there’s a fantastic app called the “Sitemap & Noindex Manager” that makes removing URLs from the sitemap (as well as adding noindex to certain pages) really easy: https://apps.shopify.com/sitemap-noindex-manager

- Opencart – it’s not the easiest to do, but it can be done. The “Advanced Sitemap Generator” from Geckodev is my preferred way, as it allows you to manually remove types or specific URLs from the sitemap: https://www.opencart.com/index.php?route=marketplace/extension/info&extension_id=32577
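If your sitemap is a static XML file that you maintain by hand rather than one generated by a plugin, the same clean-up can be scripted. This is a rough sketch under that assumption, using only Python's standard library; the function name, sample rules, and `example.com` URLs are hypothetical, and `urllib.robotparser` does not understand Google's wildcard rules, so check the result before uploading it.

```python
from urllib import robotparser
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def strip_blocked(sitemap_xml: str, robots_txt: str, user_agent: str = "Googlebot") -> str:
    """Return the sitemap XML with every robots.txt-blocked <url> entry removed."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())

    # Keep the sitemap namespace as the default (prefix-free) one on output.
    ET.register_namespace("", SITEMAP_NS)
    root = ET.fromstring(sitemap_xml.strip())

    # Iterate over a copy of the list because entries are removed while looping.
    for url_el in list(root.findall(f"{{{SITEMAP_NS}}}url")):
        loc = url_el.find(f"{{{SITEMAP_NS}}}loc")
        if loc is not None and loc.text and not parser.can_fetch(user_agent, loc.text.strip()):
            root.remove(url_el)

    return ET.tostring(root, encoding="unicode")


# Hypothetical sample data for illustration.
ROBOTS_RULES = """
User-agent: *
Disallow: /filter/
"""

OLD_SITEMAP = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/category/shoes</loc></url>
  <url><loc>https://example.com/filter/red</loc></url>
</urlset>
"""

cleaned_sitemap = strip_blocked(OLD_SITEMAP, ROBOTS_RULES)
print(cleaned_sitemap)
```

If your CMS regenerates the sitemap automatically, a manual edit like this will be overwritten, so one of the plugin routes above is the better long-term fix.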

Need A Hand? My Audits Can Help

Analysing and fixing Google Search Console errors is a small part of my SEO Audit & Opportunity Report service, which will help you to identify any technical, on page, user experience, and off site SEO problems and potential missed opportunities.

Contact me via email for more information – info@matt-jackson.com

Hi, this has been quite informative. Is there any free extension you recommend we can use in Opencart to submit a sitemap, or is there any other way to submit a sitemap? I tried submitting the sitemap directly to Google Webmaster, but when I submit the URL, it shows a “could not fetch” error. Any suggestions? Also, please suggest a robots.txt for an Opencart ecommerce site.

Hi Shivangi,

If you can’t submit the sitemap into Google Search Console then I would think something is pretty wrong here.

You can check your robots.txt file to ensure you aren’t blocking Google completely, then ensure your sitemap file is reachable (you may need a developer to fix this).

My robots.txt file is OK; there is no issue. But so far my site has not ranked in Google’s first 100 pages, even though my SEO has been running for the last 6 months.

Hi Hasan,

Your site is indexed correctly from what I can see, so it’s likely not the reason for you not ranking. New sites do tend to take longer to rank for a whole host of reasons, so you could look into adjusting your keyword targeting based on your authority.

This is truly helpful